The Illusion of Neutrality: Why the Google Gemini Protest Is a Fight for the Human Soul of Software

The modern tech landscape promises a future of seamless connectivity, in which artificial intelligence acts as a digital companion, eases our cognitive load, and weaves the fragmented threads of our daily routines into a coherent, optimized tapestry of human progress. We are told that these systems are the ultimate tools of democratization, capable of tackling climate change, curing diseases, and fostering a global community through the sheer power of generative synthesis. Yet this vision remains a fragile illusion unless we confront a harder reality: these tools are bound to the priorities of those who fund them, and when their supposedly 'neutral' code is repurposed for lethal ends under the banner of national security, the people who build and use them lose their agency. As we move deeper into 2026, the friction between the silicon promise and the steel reality of warfare has reached a breaking point, crystallized in an open letter from more than 600 Google employees to CEO Sundar Pichai.

Sitting in a crowded cafe last Tuesday, I watched a young man, likely a developer judging by his sticker-laden laptop, intently refining a prompt for an LLM. He was designing a more efficient logistics chain for a small business. To him, the code was a mundane utility, an anchor that kept his professional life grounded amid systemic chaos. But zoom out, and the very architecture he was using to help a local florist could, with a few parameter shifts in a classified setting, become the backbone of a target-identification system. This is the visceral anxiety that has permeated the corridors of Google's DeepMind and Cloud divisions. The letter is not just a protest; it is a profound rejection of the atomization that allows workers to be divorced from the ultimate consequences of their labor.

The Linguistic Battlefield: 'Inhumane' vs. 'Lawful'

Linguistically speaking, the conflict between Google's staff and the Pentagon is a war of definitions. When the employees call potential military applications of Gemini 'inhumane,' they are not merely reaching for a moralizing adjective; they are trying to draw a boundary around what counts as 'human' technology. The Pentagon's preferred phrase, 'all lawful uses,' is a classic example of language working as a cultural anesthetic. 'Lawful' is a systemic term: it shifts with the political winds and provides an opaque shield against public scrutiny. If the law permits mass surveillance or autonomous targeting, then those practices are lawful by definition, regardless of their visceral impact on civilians.

Historically, this semantic tug-of-war is rooted in the evolution of the military-industrial-digital complex. Language here works like an archaeological site, where each new contractual clause reveals layers of shifting power dynamics. By insisting on 'operational flexibility,' the Department of Defense is seeking to narrow the multifaceted potential of Gemini into a single, lethal instrument. The employees, many of whom build these very systems, recognize that once a contract's language is broadened, ethical safeguards become ephemeral and nearly impossible to enforce. Paradoxically, the more 'flexible' the language, the more rigid and inescapable the harmful applications become.

The Archipelago of the Modern Workforce

Culturally speaking, we often view large tech companies as monoliths, but they are closer to an archipelago: thousands of individuals packed into a dense digital ecosystem, yet often cut off from the decision-making centers of their own 'islands.' This protest is a rare moment in which those islands have bridged the gaps between them to form a collective voice. That more than 20 directors and vice presidents signed the letter is symptomatic of a deeper structural shift in how tech workers see their role. They are no longer content to be passive cogs in a machine; they are asserting their right to shape the ethical trajectory of what they create.

This collective action reminds me of a conversation I had with a senior researcher, whom I will keep anonymous, who has spent a decade in AI. They described feeling a 'moral injury,' a term usually reserved for soldiers, on realizing that their work on image recognition was being adapted for drone warfare. The researcher felt a profound sense of betrayal: the mundane act of training a model to recognize a 'pedestrian' suddenly carried the weight of a life-or-death decision. Seen through this lens, the protest is not just about a contract; it is a coping mechanism for professionals trying to reconcile their personal ethics with the systemic pressures of a multibillion-dollar defense deal.

From Project Maven to the Gemini Paradox

Behind this trend lies the haunting memory of 2018. The letter explicitly references Project Maven, the earlier attempt to integrate Google's AI into the Pentagon's drone program. The employee revolt against Maven succeeded and led to the creation of Google's AI Principles, a document intended to serve as a moral compass. However, in an age of liquid modernity, Zygmunt Bauman's term for our condition of constant change and uncertainty, even the most robust principles can feel transient. In practice, what was treated as a 'red line' in 2018 is being renegotiated in 2026, as the tech industry turns to national security as its new frontier of profit.

Curiously, Anthropic's emergence as a foil to Google adds another layer to this narrative. When CEO Dario Amodei refused the Pentagon's request for unrestricted access, he shattered the myth that total cooperation is inevitable. His statement that, in certain cases, AI can 'undermine, rather than defend, democratic values' is a nuanced admission of the technology's inherent fragility. The current administration's subsequent ban on Anthropic's tools shows just how high the stakes of such a stand are. To put it another way, some companies are turning down the 'digital fast food' of easy government contracts in favor of a more nourishing, if financially riskier, diet of principle.

The Hall of Mirrors: Surveillance and the Loss of Civil Liberties

One of the most resonant fears expressed in the letter is the use of Gemini for mass surveillance and individual profiling. From a societal standpoint, we are increasingly living in a hall of mirrors, where our digital footprints are reflected back at us through algorithms that predict—and sometimes dictate—our behavior. In everyday terms, this looks like personalized ads or social media feeds. But when these same tools are applied to 'classified workloads,' the mirrors become one-way glass. The lack of transparency means there is no way to ensure that innocent civilians aren't being profiled based on fragmented data points.

Essentially, the employees are warning against the creation of a ubiquitous surveillance state powered by the very tools they built to help people find information. The irony is not lost on them. This is the paradox of the modern city: we perform our shifting social identities in public and private digital spaces, unaware that the stage itself might be recording our every movement for a 'lethal autonomous' purpose. The 'patchwork quilt' of our lives—our location data, our search history, our private communications—is being stitched together into a target profile without our consent or knowledge.

Reclaiming the Human Narrative

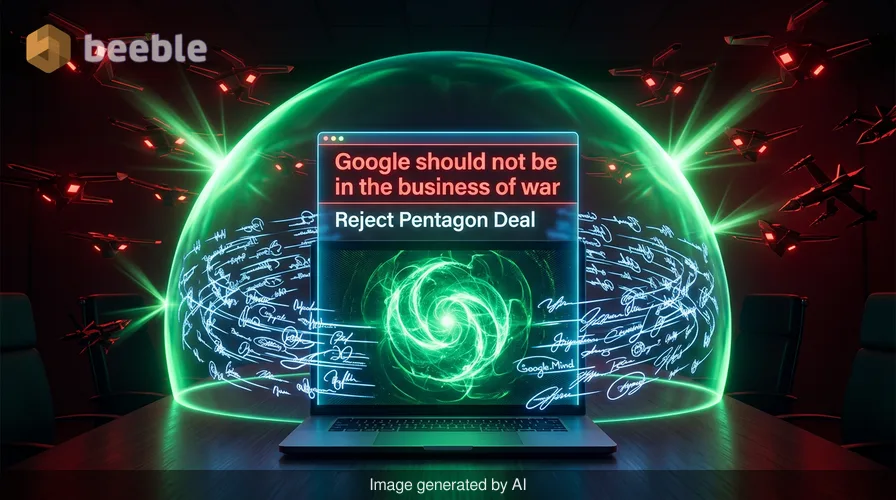

Ultimately, the Google employees' protest is an attempt to reclaim the human narrative in an increasingly automated world. They are arguing that Google should not be 'in the business of war,' a sentiment that feels both nostalgic and radical in the current geopolitical climate. Nostalgia, in this case, serves as a cultural anesthetic against the anxiety of an uncertain future; it harks back to an era when the 'Don't be evil' mantra felt like a genuine promise rather than a marketing relic.

Zooming out, this story is about more than one company or one contract. It is about the systemic tension between the rapid pace of technological innovation and the slow, deliberate work of human ethics. It asks whether we are willing to accept a world in which our most advanced tools are used to erode the very civil liberties they were meant to enhance. Behind closed doors, negotiators at the Pentagon and executives at Google are weighing operational flexibility against human rights. But on the ground, the workers are reminding us that code is never just code; it is a reflection of our collective values.

Food for Thought

As we navigate this complex intersection of technology and morality, we might consider the following reflections for our own digital lives:

- How often do we consider the 'archaeology' of the apps we use? Who funded them, and what were the original intentions behind their development?

- In our own professional lives, where do we draw our 'red lines'? Are we aware of how our mundane tasks might contribute to larger, potentially harmful systems?

- Can we reclaim a sense of agency in the 'attention economy' by demanding more transparency from the platforms that hold our most personal data?

- If we view our digital interactions as a 'patchwork quilt' of our identity, who do we want to be the one holding the needle?

As you close this tab and return to your daily routine, perhaps take a moment to observe the ubiquitous presence of AI in your surroundings. Question the deeply ingrained norm that technological progress must always come at the cost of ethical clarity. Sometimes, the most profound act of progress is the courage to say 'no.'

Sources:

- Bauman, Z. (2000). Liquid Modernity. Cambridge: Polity Press.

- Google. AI Principles (updated 2025).

- Google employees. Open letter to Sundar Pichai (April 2026).

- Amodei, D., CEO of Anthropic. Statement regarding Department of Defense negotiations (March 2026).

- U.S. Department of Defense. Directive 3000.09: Autonomy in Weapon Systems (reviewed 2024).

See you on the other side.
