Milan’s FaceBoarding System Proves That Efficiency Cannot Bypass Fundamental Rights

In the physical world, we are accustomed to showing a passport to a human agent, a brief exchange where we remain in control of our identity documents. Online and in automated transit zones, however, that dynamic shifts into something far more opaque. We are often asked to trade our most intimate data—the geometry of our faces—for the promise of a shorter queue. But as a recent decision by the Italian Data Protection Authority (the Garante) reveals, the price of that convenience is often higher than passengers realize.

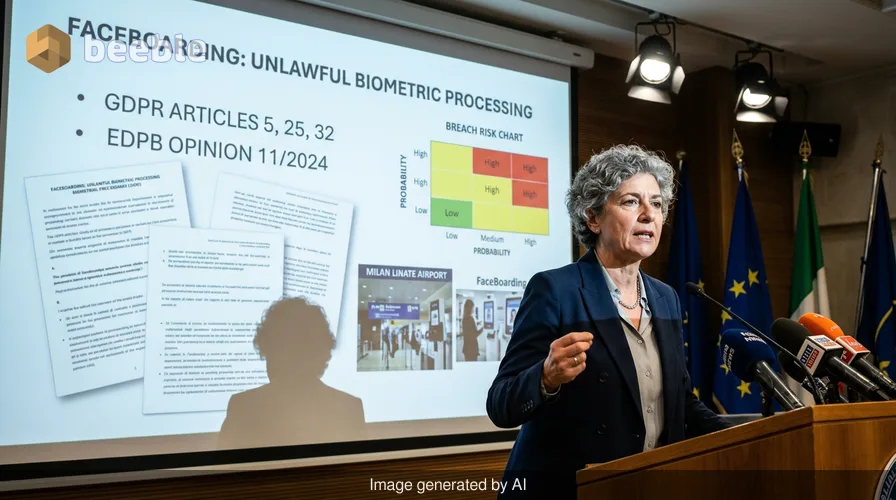

At Milan Linate Airport, the FaceBoarding system was marketed as a seamless leap into the future of travel. By scanning their faces, passengers could breeze through security and boarding gates without fumbling for paper or digital codes. Yet the Garante’s investigation into SEA (Società Esercizi Aeroportuali) has pulled back the curtain on a system that failed to respect the very laws designed to protect us. From a compliance standpoint, the ruling serves as a stark reminder: innovation without a foundation of privacy is merely a sophisticated form of risk.

The Illusion of Consent

One of the most significant findings in the Garante’s decision was the lack of valid consent. In data protection law, consent is one of the few keys that can unlock the processing of sensitive information. For biometric data used to identify a person, which the GDPR classifies as a special category (Article 9) because it is uniquely and permanently linked to an individual, that key must be turned by the user freely and explicitly.

In practice, SEA was found to have acquired facial images of passengers without obtaining the granular consent required by law. To put it another way, passengers weren't given a clear, informed choice; they were simply funneled into a system that treated their biological traits as just another piece of luggage. Under the GDPR framework, you cannot assume someone agrees to have their face digitized and stored just because they walk toward a specific gate.

A Foundation Built on Sand

Privacy by design works like the foundation of a house. If you build a sleek, modern structure on a weak foundation, the entire building remains precarious. The Garante found that SEA failed to implement this principle, violating Article 25 of the GDPR (data protection by design and by default). Instead of building privacy into the software from the first line of code, the system treated data protection as an afterthought.

This lack of structural integrity was most evident in the system’s security failures. The investigation revealed that SEA failed to encrypt the biometric templates, the mathematical representations of passengers' faces. In the hands of a malicious actor, an unencrypted biometric database is a toxic asset: unlike a password, a face cannot be changed after a data breach. Storing this data in a vulnerable state therefore created an unacceptable risk of identity theft and unauthorized tracking.
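To make the encryption point concrete, here is a minimal Python sketch of encrypting a biometric template at rest with AES-GCM, an authenticated encryption mode. It assumes the third-party `cryptography` package; the function names, the passenger-reference scheme and the placeholder template bytes are hypothetical and do not describe SEA's actual system. Binding the passenger reference as associated data means a ciphertext copied onto another passenger's record fails to decrypt instead of silently matching the wrong person.

```python
import os

from cryptography.exceptions import InvalidTag
from cryptography.hazmat.primitives.ciphers.aead import AESGCM

NONCE_SIZE = 12  # 96-bit nonce, the size recommended for AES-GCM


def encrypt_template(key: bytes, template: bytes, passenger_ref: str) -> bytes:
    """Hypothetical helper: encrypt one biometric template for storage.

    The passenger reference is authenticated (not encrypted) as associated data,
    so the ciphertext is only valid for that record.
    """
    nonce = os.urandom(NONCE_SIZE)  # fresh random nonce per encryption; never reuse with the same key
    ciphertext = AESGCM(key).encrypt(nonce, template, passenger_ref.encode())
    return nonce + ciphertext  # store the nonce alongside the ciphertext (it is not secret)


def decrypt_template(key: bytes, blob: bytes, passenger_ref: str) -> bytes:
    """Hypothetical helper: raises InvalidTag if the blob was altered or belongs to another record."""
    nonce, ciphertext = blob[:NONCE_SIZE], blob[NONCE_SIZE:]
    return AESGCM(key).decrypt(nonce, ciphertext, passenger_ref.encode())


if __name__ == "__main__":
    # In a real deployment the key would live in a KMS/HSM, never next to the data.
    key = AESGCM.generate_key(bit_length=256)
    template = bytes(range(128))  # placeholder bytes standing in for a real face template
    blob = encrypt_template(key, template, "PNR-ABC123")
    assert decrypt_template(key, blob, "PNR-ABC123") == template
    try:
        decrypt_template(key, blob, "PNR-OTHER")
    except InvalidTag:
        print("rejected: template is bound to a different passenger record")
```

Encryption only helps as much as the key management behind it: if the same server holds both the database and the key, a single breach can expose both, which is why the sketch keeps key storage out of scope rather than pretending to solve it.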

The Problem of Digital Hoarding

Data minimization is a core tenet of digital hygiene. Together with storage limitation, it requires organizations to collect only what they need and to keep it only as long as necessary (Article 5(1)(c) and (e) of the GDPR). SEA, however, opted for a policy of excessive retention. By keeping biometric data for longer than the immediate boarding process required, it turned a temporary convenience into a permanent digital footprint.

This practice directly contradicts the European Data Protection Board’s (EDPB) Opinion 11/2024. This recent guidance clarifies that for biometric systems in airports to be considered proportionate, the data should ideally remain under the passenger's control or be deleted the moment the specific purpose (like boarding a flight) is fulfilled. Keeping it longer transforms a security tool into a surveillance database.
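The deletion rule described above can be sketched as a small in-memory store that deletes a template as soon as the passenger boards and, as a safety net, purges any template older than a fixed ceiling (for passengers who never reach the gate, for example after a missed flight). This is a minimal Python sketch: the class and method names and the 24-hour ceiling are hypothetical choices for illustration, not values taken from the Garante decision or the EDPB Opinion.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict


@dataclass
class Enrollment:
    encrypted_template: bytes
    enrolled_at: datetime


class TemplateStore:
    """Hypothetical store that keeps a template only while its purpose is live."""

    def __init__(self, max_age: timedelta) -> None:
        self._records: Dict[str, Enrollment] = {}
        self._max_age = max_age  # hard ceiling for templates whose purpose never completes

    def enroll(self, passenger_ref: str, encrypted_template: bytes, now: datetime) -> None:
        self._records[passenger_ref] = Enrollment(encrypted_template, now)

    def on_boarded(self, passenger_ref: str) -> bool:
        """Purpose fulfilled: delete the template immediately. Returns True if one was deleted."""
        return self._records.pop(passenger_ref, None) is not None

    def purge_expired(self, now: datetime) -> int:
        """Delete templates older than the ceiling and return how many were removed."""
        expired = [ref for ref, rec in self._records.items()
                   if now - rec.enrolled_at > self._max_age]
        for ref in expired:
            del self._records[ref]
        return len(expired)

    def __len__(self) -> int:
        return len(self._records)


if __name__ == "__main__":
    t0 = datetime(2024, 6, 1, 8, 0, tzinfo=timezone.utc)
    store = TemplateStore(max_age=timedelta(hours=24))  # illustrative ceiling, an assumption
    store.enroll("PNR-1", b"<ciphertext>", t0)
    store.enroll("PNR-2", b"<ciphertext>", t0)
    store.on_boarded("PNR-1")                      # deleted at the gate
    store.purge_expired(t0 + timedelta(hours=25))  # PNR-2 never boarded; removed by the ceiling
    print(len(store))  # 0
```

The design choice is that deletion is triggered by the event that ends the purpose (boarding), with the time-based purge only as a backstop; a store that relied on the periodic purge alone would keep every template for the full ceiling.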

Why This Matters for the Future of Travel

This ruling isn't just about one airport in Italy; it’s a compass for any organization looking to deploy facial recognition. The Garante has made it clear that the "cool factor" of new technology does not grant a license to ignore Article 32 (Security of processing) or Article 5 (Principles relating to processing of personal data).

Ultimately, the decision reinforces the idea that our biometric data is protected by a fundamental right, not a commodity to be harvested for operational efficiency. As we move toward more automated environments, the burden remains on the data controller to prove that their systems are robust, transparent, and, above all, respectful of the individual.

Practical Steps for Organizations and Travelers

For businesses looking to stay on the right side of the law, and for travelers wanting to protect their digital identity, here are the actionable takeaways from the Linate decision:

- Audit Biometric Workflows: If your organization uses facial recognition, verify that consent is explicit and granular. Users must be able to opt out without penalty.

- Enforce Encryption: Biometric templates should never be stored in plaintext. Use state-of-the-art encryption and keep the keys separate from the data, so that even if the database is breached, the templates remain useless to intruders.

- Practice Data Minimization: Set strict auto-delete protocols. If the passenger has boarded the plane, there is rarely a lawful reason to retain their facial template.

- Review EDPB Guidelines: Ensure your systems align with Opinion 11/2024, which sets out the EDPB's position on biometric processing in airports.

- Traveler Tip: Always look for the "analog" alternative. You have the right to choose traditional document checks over biometric scanning in most jurisdictions.
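The consent and alternative-lane points above translate into a simple rule for the software: the biometric lane opens only on a recorded, purpose-specific opt-in, and every other case falls back to a document check. A minimal Python sketch, in which the purpose names, the `ConsentRecord` type and the lane labels are all hypothetical:

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Dict, Optional

PURPOSES = ("security_check", "boarding")  # hypothetical purposes, each consented to separately


@dataclass
class ConsentRecord:
    # purpose -> time the passenger actively opted in; a missing purpose means no consent
    granted: Dict[str, datetime] = field(default_factory=dict)

    def grant(self, purpose: str, at: datetime) -> None:
        if purpose not in PURPOSES:
            raise ValueError(f"unknown purpose: {purpose}")
        self.granted[purpose] = at

    def withdraw(self) -> None:
        # Withdrawal removes every purpose at once
        self.granted.clear()


def choose_lane(consent: Optional[ConsentRecord], purpose: str) -> str:
    """Never infer consent: no record or no opt-in for this purpose means the manual lane."""
    if consent is None or purpose not in consent.granted:
        return "manual_document_check"  # the alternative must not carry a penalty
    return "biometric"


if __name__ == "__main__":
    c = ConsentRecord()
    c.grant("security_check", datetime(2024, 6, 1, 7, 30))
    print(choose_lane(c, "security_check"))  # biometric
    print(choose_lane(c, "boarding"))        # manual_document_check
```

Defaulting to the manual lane whenever consent is absent mirrors the point in the article that walking toward a gate is not agreement.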

Sources:

- General Data Protection Regulation (GDPR), Articles 5, 25, and 32.

- Italian Data Protection Authority (Garante per la protezione dei dati personali) - Decision on SEA Milan Linate.

- European Data Protection Board (EDPB) Opinion 11/2024 on the use of facial recognition to streamline airport passengers’ flow.

Disclaimer: This article is for informational and journalistic purposes only and does not constitute formal legal advice. For specific compliance requirements, consult with a qualified legal professional or your Data Protection Officer.

See you on the other side.
