Why Your Local Factory Might Soon Be Managed by a Robot with a 'Visual Scratchpad'

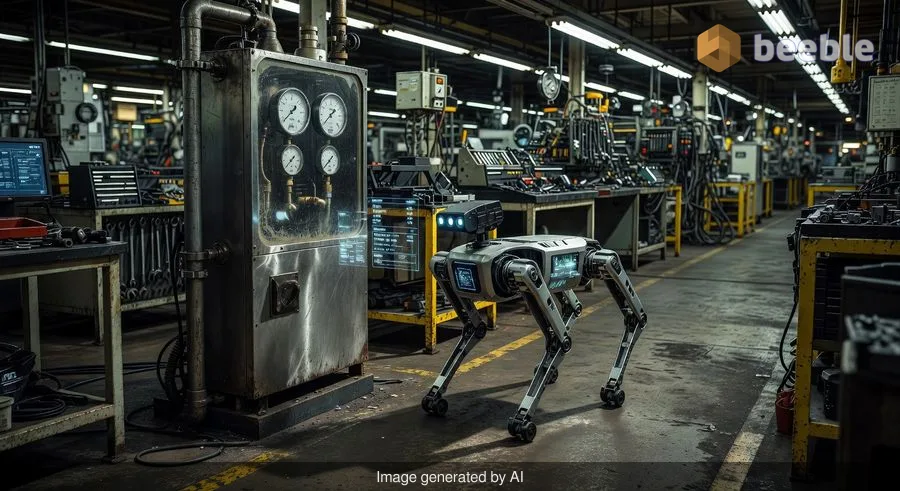

Imagine a tireless intern wandering through a sprawling industrial complex. This intern doesn’t need coffee, never gets bored of staring at the same pressure gauge for the thousandth time, and can now tell the difference between a slightly loose bolt and a catastrophic pipe failure with the precision of a seasoned engineer. This isn't a scene from a sci-fi reboot; it is the tangible result of the latest collaboration between Google DeepMind and Boston Dynamics.

On April 14, 2026, Google announced the release of Gemini Robotics-ER 1.6, a specialized AI model designed to give robots like the four-legged Spot 'embodied reasoning.' In simple terms, this means the robot isn't just a remote-controlled camera anymore. It is starting to understand the physical world it inhabits, moving from a simple tool to an autonomous inspector capable of reading analog dials and identifying tools in a cluttered room with near-human accuracy.

The End of the 'Blind' Robot

Historically, robots have been brilliant at being repetitive but terrible at being observant. If you programmed a robotic arm to spot-weld a car door, it would do it perfectly a million times. However, if that car door was shifted two inches to the left, the robot would likely keep welding thin air. This lack of adaptability has kept robots confined to highly controlled environments like assembly lines.

Under the hood of this new update is something Google calls 'agentic vision.' Think of this as a visual scratchpad. When the robot looks at a complex scene—say, a wall of 50 different analog gauges in an aging power plant—it doesn't just take a photo. It uses the AI model to 'point' at specific elements, execute small snippets of code to verify what it sees, and reason through the data.
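As a rough illustration of the kind of small verification snippet described above, here is a minimal sketch of turning pointed-at pixel locations into a gauge reading. It assumes the model has already marked four points on the image: the dial center, the needle tip, and the minimum and maximum tick marks. The function name `read_gauge`, its parameters, and the assumption of a linear scale that sweeps clockwise are hypothetical and not taken from Google's announcement.

```python
import math


def read_gauge(center, needle_tip, min_tick, max_tick, min_value, max_value):
    """Estimate a dial reading from four (x, y) pixel points.

    Assumes image coordinates (y grows downward) and a linear scale
    that sweeps clockwise from min_tick to max_tick.
    """
    def angle_deg(point):
        # With y pointing down, a larger atan2 angle means "further clockwise".
        return math.degrees(math.atan2(point[1] - center[1], point[0] - center[0]))

    start = angle_deg(min_tick)
    # Clockwise sweep from the minimum tick, normalised to [0, 360).
    full_sweep = (angle_deg(max_tick) - start) % 360.0
    needle_sweep = (angle_deg(needle_tip) - start) % 360.0

    if full_sweep == 0.0:
        raise ValueError("minimum and maximum ticks lie at the same angle")
    if needle_sweep > full_sweep:
        # The needle sits in the unmarked gap between max and min ticks.
        raise ValueError("needle lies outside the printed scale")

    fraction = needle_sweep / full_sweep
    return min_value + fraction * (max_value - min_value)


# A 0-10 bar gauge with a 270 degree scale and the needle pointing straight up.
reading = read_gauge(
    center=(100.0, 100.0),
    needle_tip=(100.0, 20.0),
    min_tick=(29.3, 170.7),
    max_tick=(170.7, 170.7),
    min_value=0.0,
    max_value=10.0,
)
print(f"{reading:.2f} bar")
```

Rejecting a needle that falls outside the printed scale, rather than clamping it, is a deliberate choice in this sketch: a reading the code cannot justify is surfaced as an error instead of a plausible-looking number.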

Practically speaking, this has led to a massive leap in performance. The previous version of the model, Gemini Robotics-ER 1.5, read instruments correctly only about 23 percent of the time. The new 1.6 model raises that accuracy to 98 percent. For the average user, this is the difference between a GPS that occasionally tells you to drive into a lake and one that navigates a complex five-way intersection without breaking a sweat.
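To put those two percentages in operational terms, the short calculation below estimates how many misreads to expect on a wall of 50 gauges. It is a back-of-the-envelope sketch that assumes the reported accuracy applies uniformly and independently to every gauge, which a real plant would not guarantee. The helper name `expected_misreads` is illustrative.

```python
def expected_misreads(gauge_count, accuracy_percent):
    """Expected number of wrong readings, given accuracy as a whole percentage."""
    error_percent = 100 - accuracy_percent
    return gauge_count * error_percent / 100


GAUGES = 50  # the wall-of-gauges example used earlier in the article

old_misreads = expected_misreads(GAUGES, 23)  # Gemini Robotics-ER 1.5
new_misreads = expected_misreads(GAUGES, 98)  # Gemini Robotics-ER 1.6

print(f"1.5: about {old_misreads} misreads per pass")
print(f"1.6: about {new_misreads} misreads per pass")
print(f"error rate reduced by a factor of {old_misreads / new_misreads}")
```

Under these assumptions, the older model would misread roughly 38 or 39 of the 50 gauges on each pass, while the newer one would misread about one.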

Why Analog Gauges Still Matter in a Digital World

It might seem counterintuitive to spend millions of dollars teaching a high-tech robot dog how to read a 50-year-old analog thermometer. Why not just replace the thermometer with a digital sensor that sends data to the cloud?

Looking at the big picture, the global industrial backbone is incredibly resilient—and incredibly old. Replacing every manual valve, sight glass, and pressure gauge in a refinery or a Hyundai automotive plant would cost billions and require months of downtime. It is far more scalable to give a robot the 'eyes' to read existing equipment than it is to rebuild the world to suit the robot.

This is where the partnership with Boston Dynamics becomes critical. Their robot, Spot, is already being trialled in facilities owned by the Hyundai Motor Group. By using Gemini Robotics-ER 1.6, Spot can now perform 'multi-view reasoning.' It can use its various camera streams to understand its environment in 3D, ensuring it doesn't just see a gauge, but understands where that gauge sits in relation to the rest of the machinery.

Solving the 'Hallucination' Problem

One of the biggest hurdles for AI in the physical world is 'hallucination'—the tendency for models to confidently assert something is there when it isn't. In a chatbot, a hallucination is a funny quirk; in a heavy industry setting where a robot is monitoring volatile chemicals, a hallucination is a safety nightmare.

Google’s testing showed that the 1.6 model is much better at staying grounded in reality. In a test involving a cluttered table of tools, the older model 'saw' a wheelbarrow that didn't exist simply because it was asked to look for one. The new model, conversely, correctly identified the hammers, scissors, and pliers while ignoring the 'trick' question. This improved accuracy is foundational for moving robots out of the lab and into the messy, unpredictable real world.
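The behaviour being tested can be pictured with a small sketch: answer a request only from what was actually detected, and report zero for anything that was not. This is not how Gemini Robotics-ER works internally. It is a hypothetical downstream check, and the `Detection` type, the `count_requested` function, and the 0.5 confidence threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    label: str
    confidence: float  # 0.0 to 1.0, as reported by some upstream detector


def count_requested(requested, detections, min_confidence=0.5):
    """Count confident detections for each requested label.

    Labels that were asked for but never confidently detected map to 0,
    so a leading question cannot conjure an object into the answer.
    """
    seen = {}
    for det in detections:
        if det.confidence >= min_confidence:
            seen[det.label] = seen.get(det.label, 0) + 1
    return {label: seen.get(label, 0) for label in requested}


table = [
    Detection("hammer", 0.93),
    Detection("hammer", 0.81),
    Detection("scissors", 0.88),
    Detection("pliers", 0.76),
    Detection("wheelbarrow", 0.08),  # low-confidence guess prompted by the question
]

answer = count_requested(["hammer", "pliers", "wheelbarrow"], table)
print(answer)
```

The point of the sketch is the last line of the function: the answer is built from the evidence, and the request only decides which parts of that evidence are reported.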

| Feature | Gemini Robotics-ER 1.5 | Gemini Robotics-ER 1.6 | Gemini 3.0 Flash |
|---|---|---|---|
| Instrument Reading Accuracy | 23% | 98% | 67% |
| Visual Reasoning | Basic | Agentic (Visual Scratchpad) | Standard |
| Safety Constraints | Manual | Integrated/Systemic | General |
| Hallucination Rate | High | Low | Moderate |

Safety First: The Robot as a Guardian

Beyond just reading dials, the new model is described as Google’s safest yet. It has been trained to understand physical safety constraints, such as how to handle liquids without spilling them or how to navigate around humans.

To put it another way, the AI is learning the 'common sense' rules of the physical world. It can now perceive the risk of injury in complex scenarios, such as recognizing that a child near an electrical outlet is a high-risk situation. While we are still far from a robot having a human-level understanding of ethics, these incremental steps toward 'embodied reasoning' are essential for a future in which machines work alongside us rather than behind a safety fence.

What This Means for You

From a consumer standpoint, you likely won't have a Spot dog reading your home thermostat anytime soon. However, the downstream effects are significant.

- Lower Costs, Fewer Failures: As industrial facilities become more efficient and less prone to human error or equipment failure, the cost of manufacturing goods—from cars to electricity—becomes more stable.

- The Democratization of Vision: The 'agentic vision' tech developed here will eventually trickle down into consumer devices. Imagine a smartphone app that doesn't just take a photo of your fuse box but tells you exactly which switch is tripped and why.

- Safety Standards: We are seeing the birth of a new safety framework for AI. As these models learn to respect physical boundaries, they set the stage for more advanced home assistants and delivery robots that are genuinely safe to be around.

Ultimately, this isn't just about a robot dog looking at a thermometer. It’s about the merging of digital intelligence with physical presence. We are moving toward a world where the 'digital crude oil' of data is being extracted and refined by machines that can finally see the world as clearly as we do.

As you go about your day, take a moment to look at the invisible industrial mechanics around you—the pipes in your basement, the meters on the side of your house, the complex machinery in the back of a grocery store. For decades, these have required a human pair of eyes to stay safe. We are now entering an era where those eyes never blink, never tire, and—thanks to a visual scratchpad—rarely make a mistake.

See you on the other side.
